By Cliff Potts

Editor-in-Chief, WPS News

Published: Friday, February 27, 2026

Time: 12:30 PM

The Fantasy of Technological Rescue

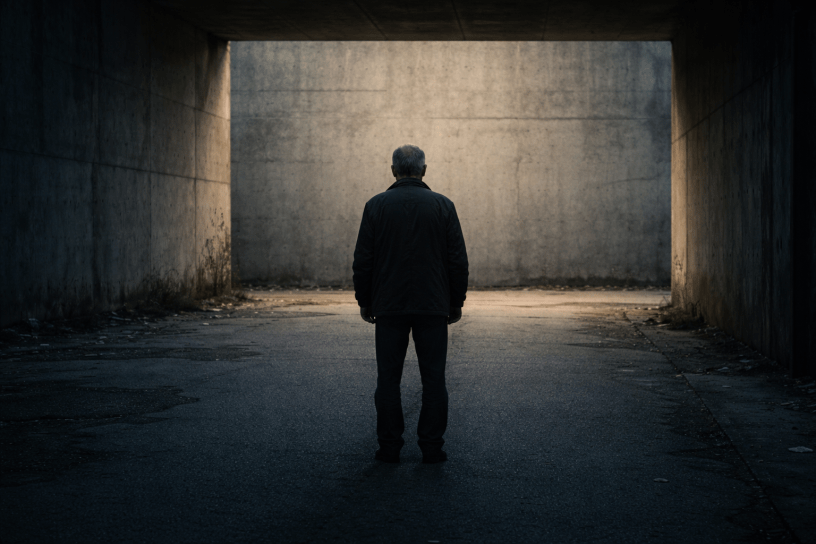

There is a quiet fantasy embedded in modern tech culture: that intelligence—especially artificial intelligence—can rescue anyone. That enough insight, enough pattern recognition, enough “help” can compensate for decades of structural harm. This fantasy is comforting. It suggests that no one is ever truly lost, that a sufficiently advanced tool can reverse the damage done by poverty, neglect, violence, exclusion, or long-term social abandonment.

It is also false.

AI systems like ChatGPT are not designed to undo systemic damage accumulated over a lifetime. They cannot repair what society deliberately allowed to break. Expecting them to do so misunderstands both technology and harm.

Damage Is Not a Skills Deficit

Long-term systemic damage is not a skills deficit. It is not a missing checklist. It is not a lack of motivation, intelligence, or effort. It is the cumulative effect of structures working exactly as designed—education systems that sort instead of nurture, labor markets that discard instead of retrain, healthcare systems that ration dignity, and social narratives that blame individuals for outcomes engineered long before they had agency.

By the time someone reaches the later stages of life carrying that damage, the problem is not information. It is time. It is attrition. It is the narrowing of options after decades of constrained choice. AI can explain paths that once existed; it cannot reopen doors that closed twenty or forty years ago.

Human Lives Are Not Reversible Systems

Software operates on the assumption of reversibility. Data can be reprocessed. Errors can be corrected. Systems can be optimized. Human lives subjected to prolonged systemic harm are not reversible systems. They are entropy stories.

Energy leaks out over time: health declines, networks vanish, credibility erodes, and resilience is spent on survival alone. No algorithm restores lost decades, lost compounding, or lost trust.

This is where the rhetoric around “empowerment” becomes cruel.

The Violence of False Empowerment

When society suggests that a person damaged from birth should be able to “fix” their life with the right tools—AI included—it quietly absolves itself. The burden shifts from collective responsibility to individual failure. If the software exists and the person still struggles, the implication is moral: you didn’t use it well enough. You didn’t adapt. You didn’t try hard enough.

That narrative is not just wrong; it is violent.

AI does not live inside the constraints it advises people to work around. It does not age. It does not carry a medical history. It does not accumulate rejection letters, criminal records, employment gaps, or invisible reputational scars. It does not experience the exhaustion of having already climbed the same hill ten times and slid back down each time because the ground itself was unstable.

What AI Can—and Cannot—Do

At best, AI can help someone articulate their situation. It can offer language, reflection, structure, and clarity. It can witness. It can organize thoughts that were previously scattered. It can reduce friction at the margins.

These are not nothing—but they are not salvation.

What AI cannot do is compensate for a society that wrote off certain lives early and never meaningfully re-invested in them. It cannot supply missing capital, missing years, missing health, or missing institutional forgiveness. It cannot override ageism, classism, or the market logic that treats human beings as depreciating assets.

Structural Guilt and Technological Denial

The expectation that technology should repair irreparable harm is a symptom of a deeper refusal to confront structural guilt.

We want tools to fix people because fixing systems is expensive, politically dangerous, and morally uncomfortable. It requires admitting that some lives were, in fact, damaged beyond repair by policy, culture, and neglect—and that no amount of “personal growth” rhetoric will change that.

This does not mean people are worthless. It means the damage was real.

A Mirror, Not a Redeemer

AI is a mirror, not a redeemer. It reflects the world that built it. If the world has no path for late-stage recovery from lifelong harm, the machine will not invent one. Expecting it to do so is not optimism; it is denial.

The tragedy is not that AI fails to save these lives. The tragedy is that society wants a machine to do the saving so it doesn’t have to admit it already failed.
